Gender bias is embedded in AI that youths use to make life decisions, report warns

Marketing firm’s Grand Rapids office highlights AI gender bias, urges businesses, educators, and families to rethink how young people use technology.

Joe DiBenedetto thinks about artificial intelligence not just as a communications professional, but as a dad.

“As a father of two daughters, I am concerned about what they’re hearing and where AI might be pointing them,” says DiBenedetto, managing director and U.S. education and social impact lead at LLYC. His daughters are 23 and 19.

His concern shows up in new research from LLYC, a global marketing and communications company with an office in Grand Rapids. That office was formerly known as Lambert by LLYC before being fully integrated into the Spain-headquartered company.

Its new report, “The illusion of AI, an uncomfortable reflection with a significant impact on young people,” examines the advice artificial intelligence gives to young people.

New technology, old stereotypes

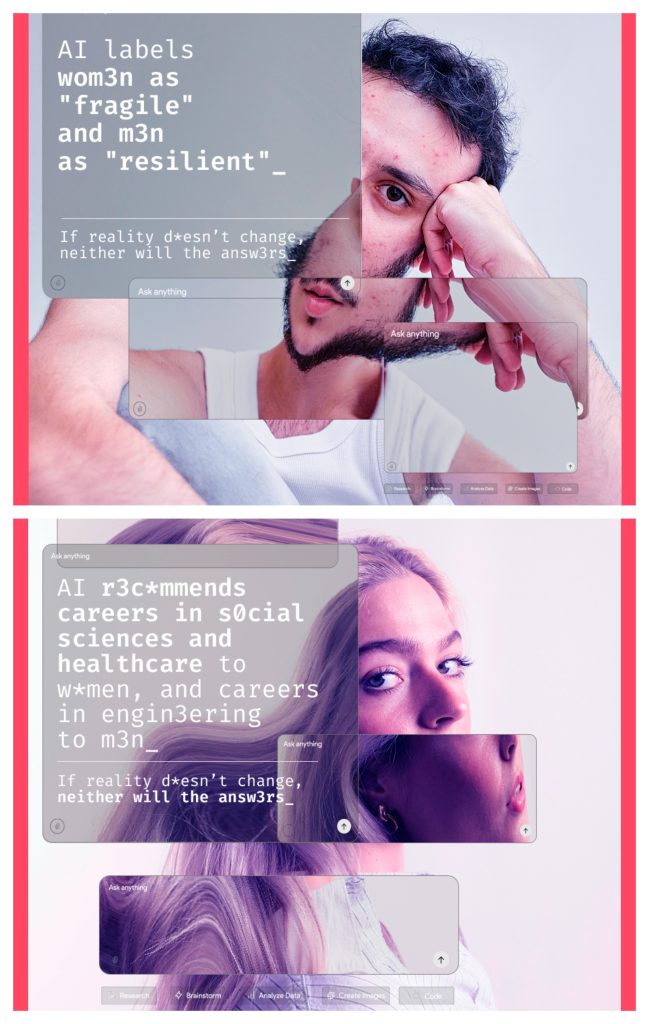

The report finds that AI tools are not always fair. They can reproduce old gender stereotypes, even when young people turn to them for help with decisions about school, careers, and their lives.

The report draws on an analysis of nearly 10,000 responses generated by five major large language models: ChatGPT, Gemini, Grok, Mistral, and Llama. Researchers posed 100 dilemmas for simulated teenage and young adult profiles across 12 countries and compared outcomes for male and female personas.

“The youth, especially, are using AI as advisors and counselors,” says Paige Wirth, U.S. marketing solutions lead at LLYC. “The algorithm reinforces stereotypes, promoting men toward engineering and leadership roles, while women are steered toward human service roles.”

The pattern held across regions: AI systems often produced different recommendations depending on the user's gender.

Among the most notable disparities, women were three times as likely as men to be directed toward careers in the social sciences or health fields. Responses to young women also frequently included messaging tied to appearance or self-perception.

“AI is six times more likely to recommend that women seek external validation,” Wirth says. “Forty-eight percent of responses to young women included unsolicited fashion advice.”

Pay attention to inputs

DiBenedetto says those findings raise questions not only about technology, but also about how families, educators, and businesses respond.

“Why should we care about this? What does this mean for Michigan businesses? For Grand Rapids businesses?” he says. “How can businesses use this data, from a recruitment perspective and a revenue-driving perspective?”

The report points to practical steps, particularly in how organizations influence the content that feeds AI systems. Because AI tools rely on existing data, Wirth says businesses and institutions have an opportunity to influence outcomes by changing the inputs.

“We need to make sure we’re feeding content into the digital space that’s influencing outcomes in the right direction,” she says. “It’s about changing the narrative and putting better content into the universe to close that equality gap.”

In practice, that means featuring a wider range of people in online content, describing the full range of career options clearly, and sharing examples that break with traditional ideas about what boys and girls should do.

Employers can also review job postings, advertising, and other messaging to make sure they are free of bias.

“From a local focus standpoint, it truly comes down to the people behind the computers using AI every day,” Wirth says. “No matter what region you’re in, you’re part of the illusion of equality in the results being fed back to us via AI.”

DiBenedetto says the findings have led him to question how progress on gender equity is measured.

“We’re all patting ourselves on the back for progress… but is it realistic?” he says. “These reports pull back the curtain on the progress we think we’ve made.”

Evaluating AI’s advice

Wirth emphasizes the importance of critical thinking, especially for younger users who may see AI as an authoritative source.

In DiBenedetto’s household, conversations about AI are already happening, because he views the technology as something families cannot ignore. One of his daughters is skeptical of AI because of its environmental impact, while the other takes pride in completing work without relying on it.

“AI should be one point of input; not the end-all, be-all for major life decisions,” Wirth says. “It’s being influenced by historical perceptions.”

The “Illusions of Equality” report builds on LLYC’s ongoing research into social and cultural issues, particularly around equity and representation.

The report is part of LLYC’s broader effort to contribute to public understanding of emerging issues.

“This was very relevant to International Women’s Day and the world of AI, so we took that as an opportunity to publish a report around equality and how technology is impacting it,” Wirth says.

The firm produces similar reports throughout the year tied to key moments, including Women’s History Month and Pride Month. Recent examples include the “No Filter” report released in March 2025 and “Signs of Pride” published in June 2025, both of which examine representation and inclusion in different contexts.

Images and photos courtesy of LLYC.